Clinical

Nursing EMR

An AI-powered EMR simulation platform that bridges the gap between clinical practice and nursing education. 6 AI Agents evaluate student performance across 55 criteria in real time.


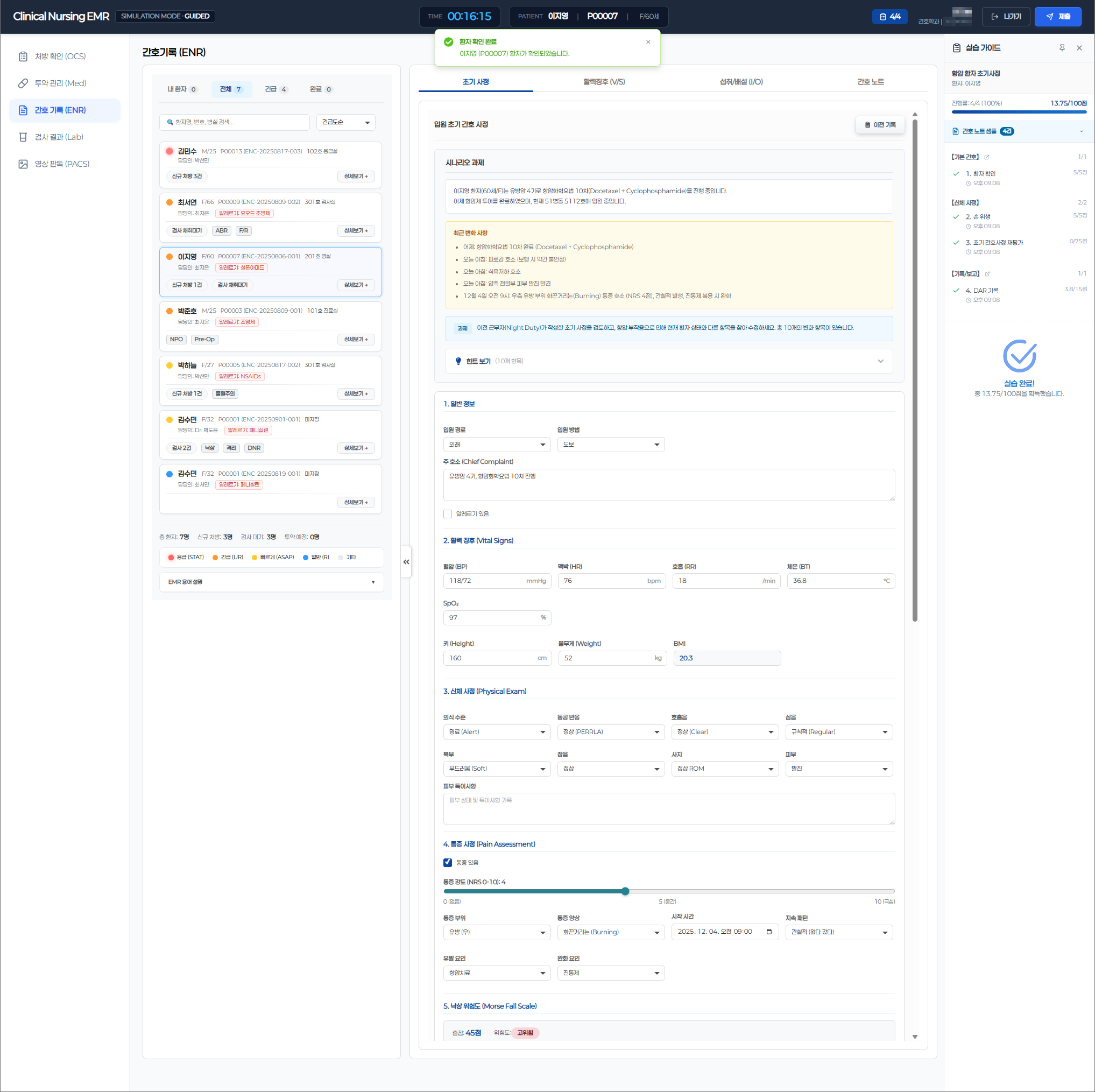

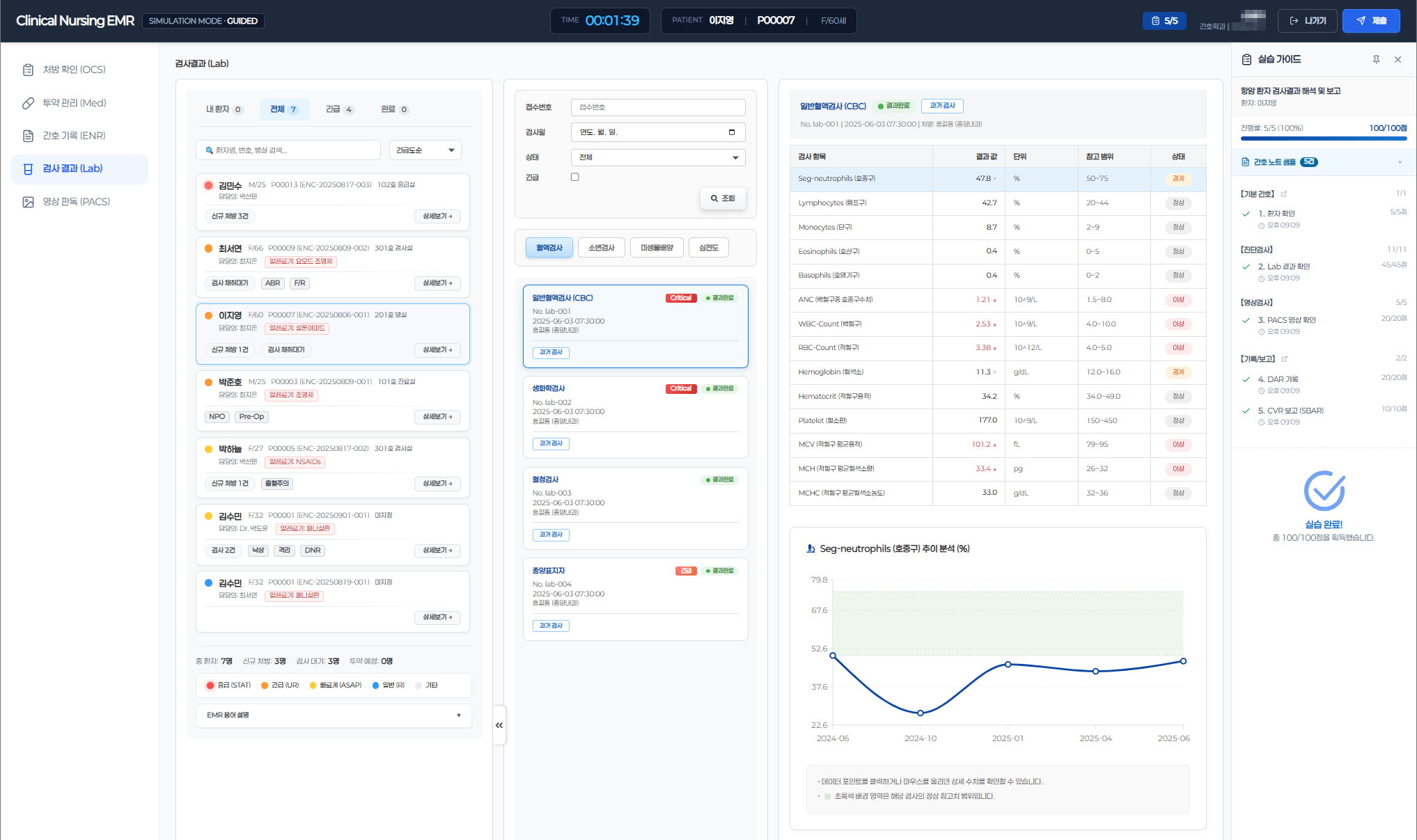

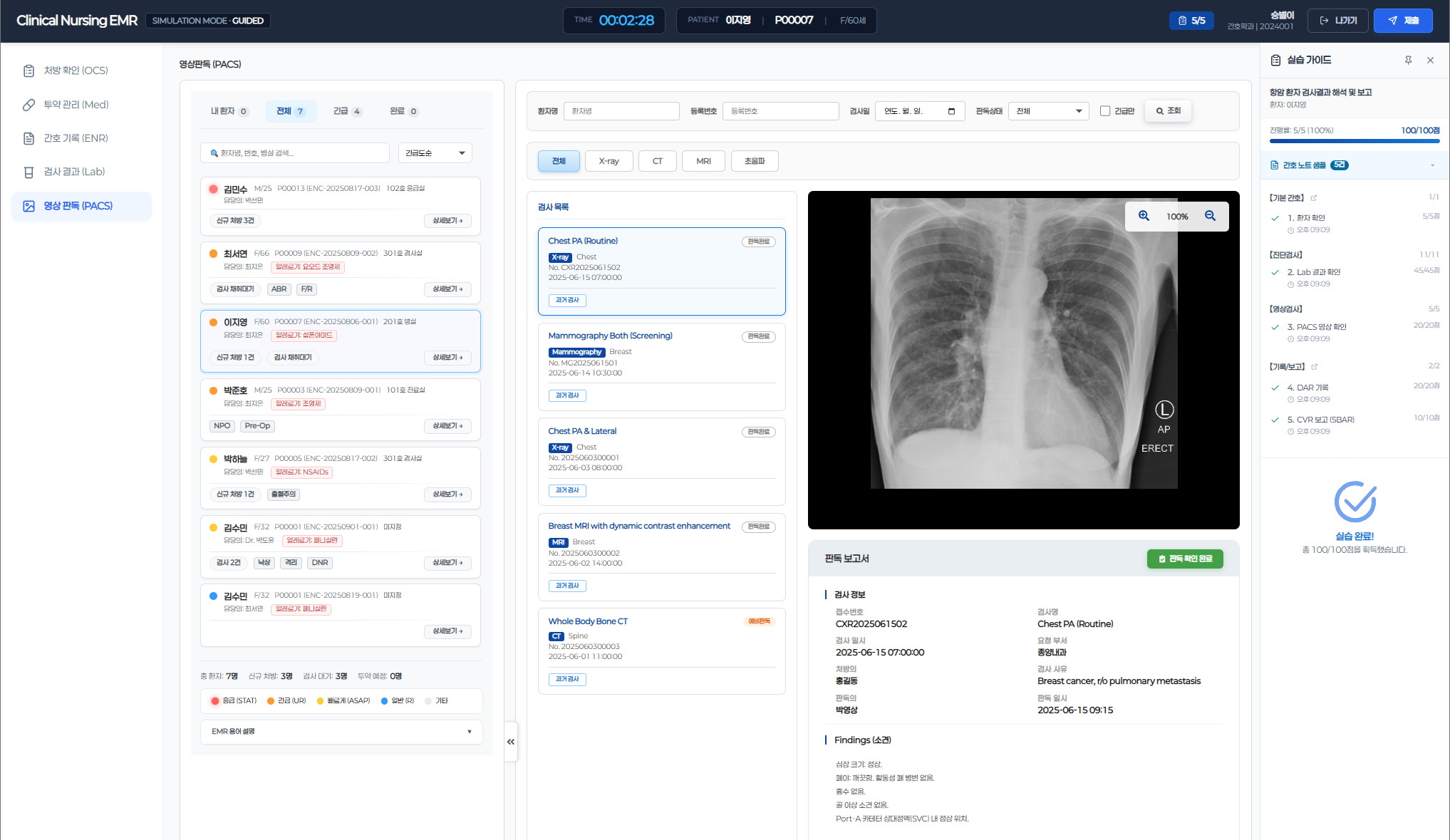

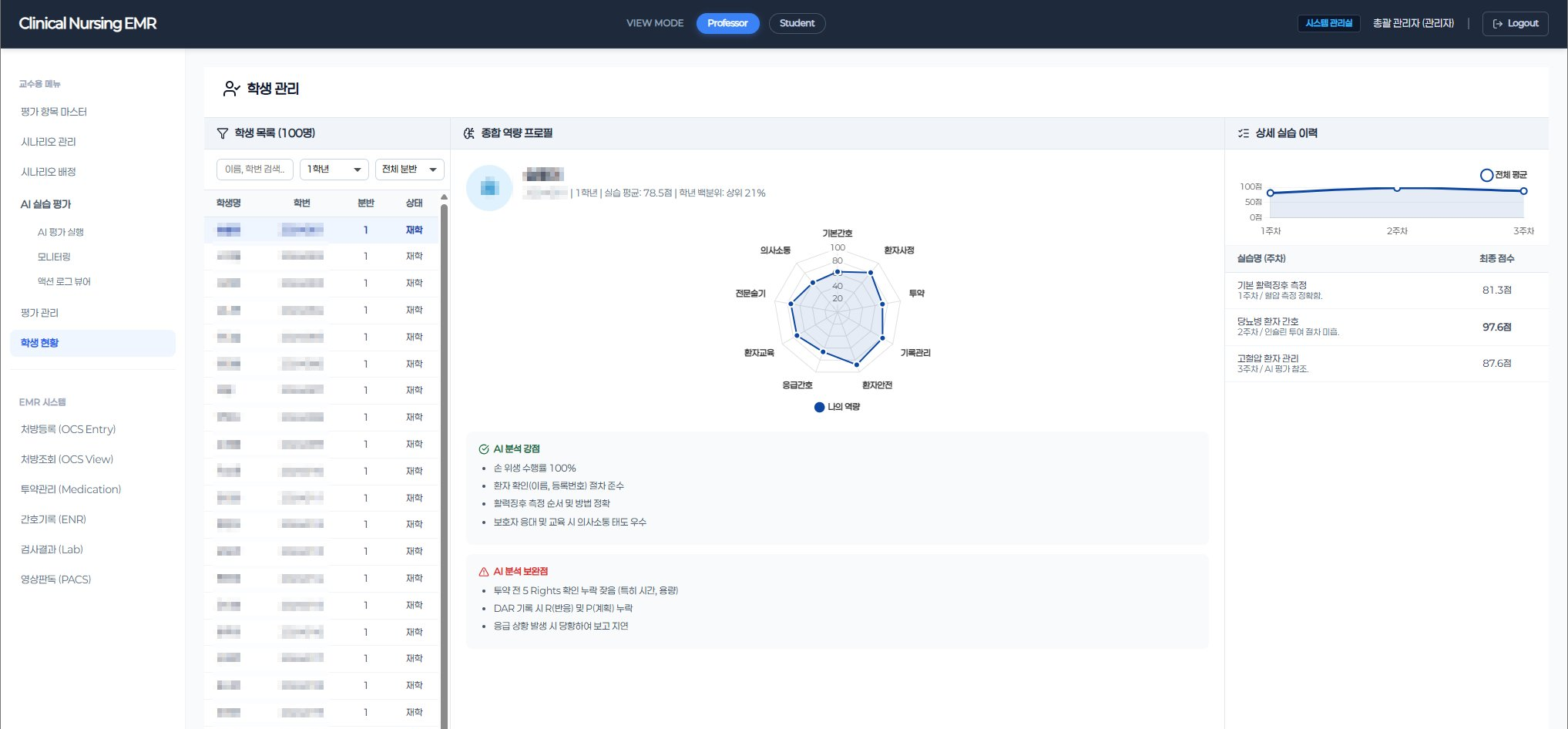

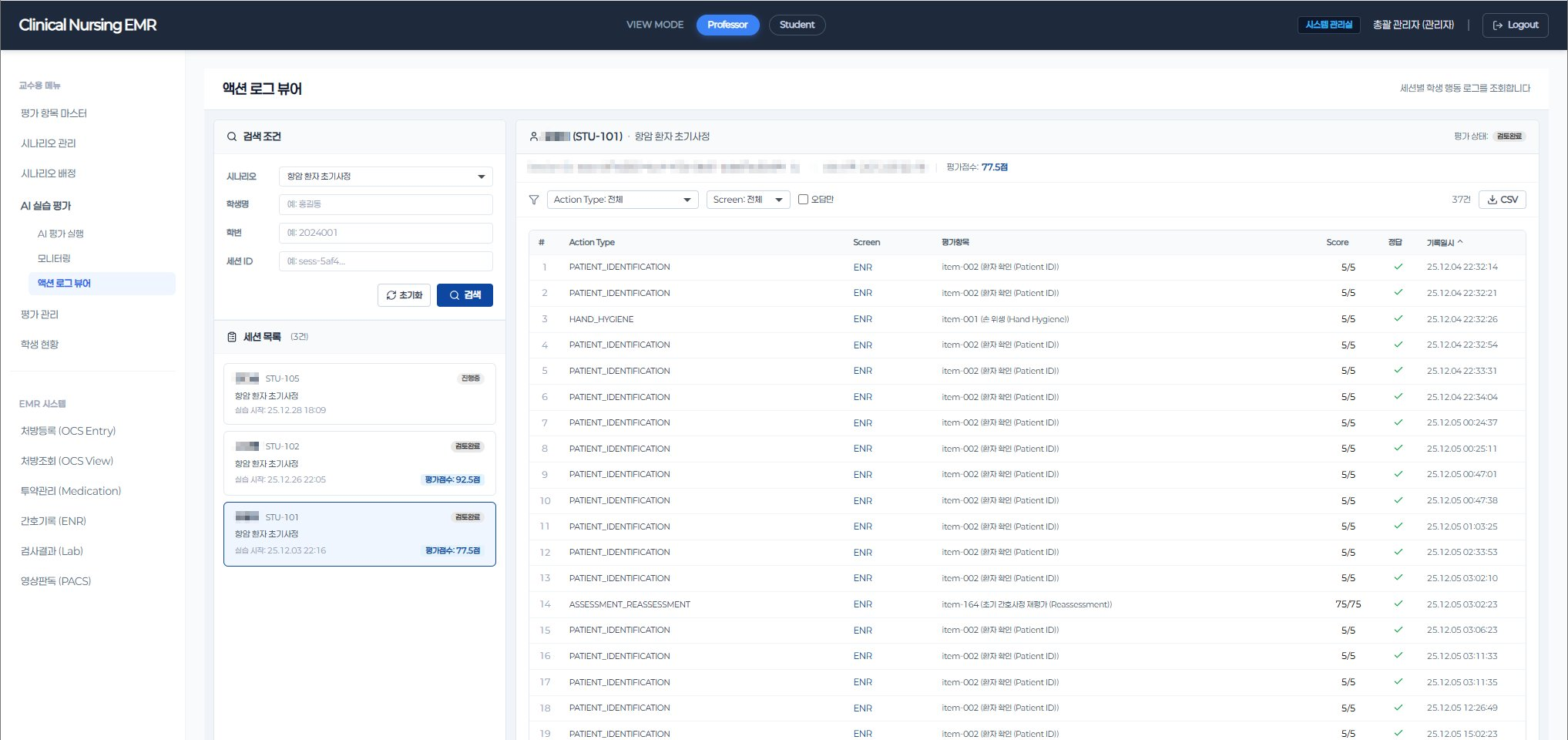

Nursing students practice clinical workflows in a hospital-grade EMR environment covering OCS (Order Communication System), ENR (Electronic Nursing Record), Lab, and PACS (Picture Archiving and Communication System), exactly as they would on an actual ward. Every action, input, and decision is captured as a structured evaluation log in real time.
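The page does not show what a "structured evaluation log" looks like. As an illustration only, one log entry might be modeled as a small typed record serialized to one JSON line per action. The field names (`session_id`, `module`, `action`, `payload`) and the action name are hypothetical. Only the four module names come from the text above.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any

# Module names taken from the text; everything else in this schema is hypothetical.
MODULES = {"OCS", "ENR", "Lab", "PACS"}


@dataclass(frozen=True)
class ActionLogEntry:
    """One student action captured during a simulation (illustrative schema)."""
    session_id: str
    student_id: str
    module: str                      # EMR module where the action happened
    action: str                      # hypothetical action name, e.g. "record_vital_signs"
    payload: dict[str, Any] = field(default_factory=dict)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        # Reject entries for modules the simulation does not have.
        if self.module not in MODULES:
            raise ValueError(f"unknown module: {self.module}")


def to_json_line(entry: ActionLogEntry) -> str:
    """Serialize an entry as a single JSON line for an append-only log."""
    return json.dumps(asdict(entry), ensure_ascii=False, sort_keys=True)


if __name__ == "__main__":
    entry = ActionLogEntry(
        session_id="s-001",
        student_id="stu-042",
        module="ENR",
        action="record_vital_signs",
        payload={"bp": "120/80", "hr": 72},
    )
    print(to_json_line(entry))
```

An append-only, one-record-per-line format keeps each action independently parseable. This suits later batch evaluation, though the real platform may store logs differently.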

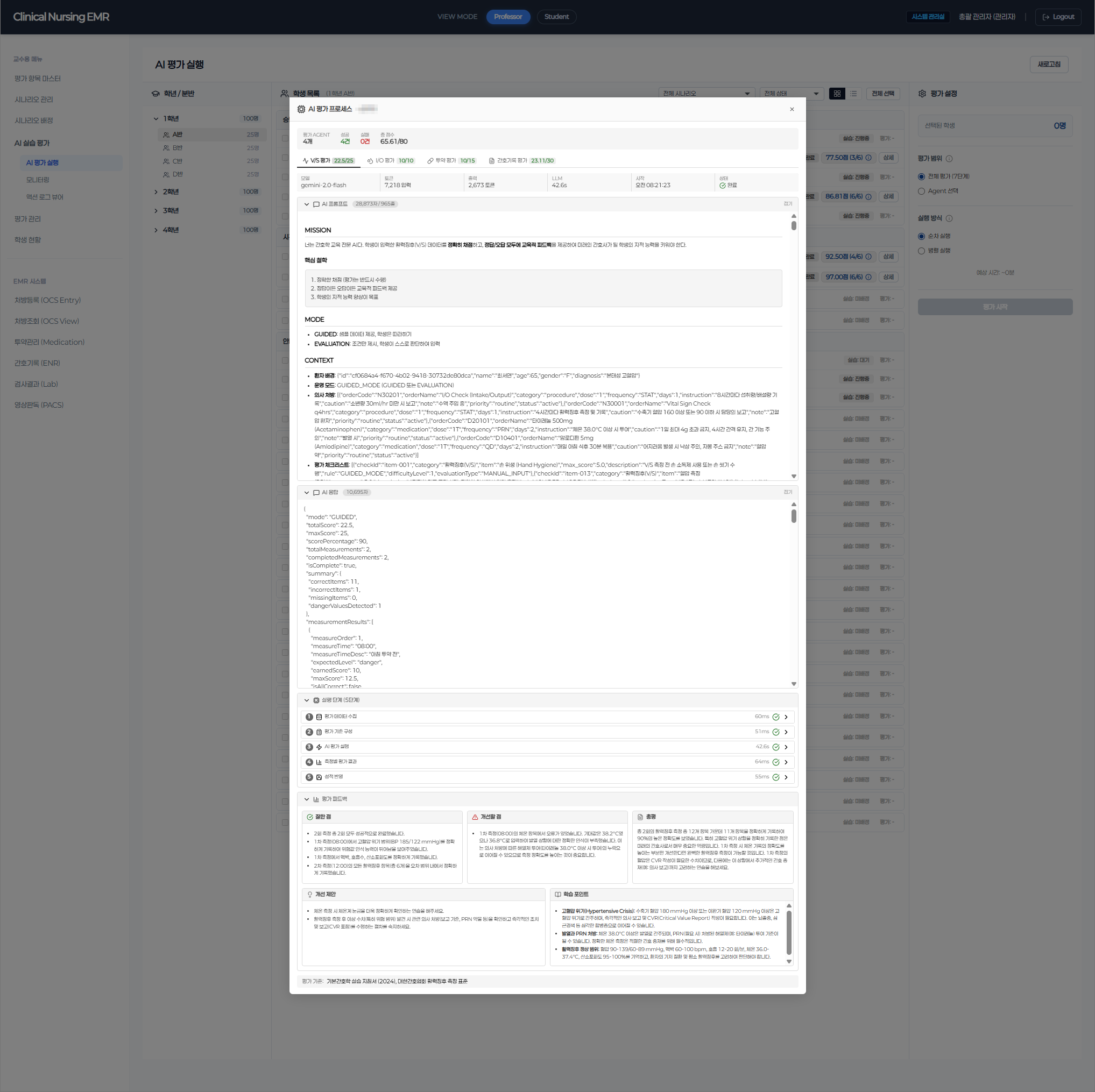

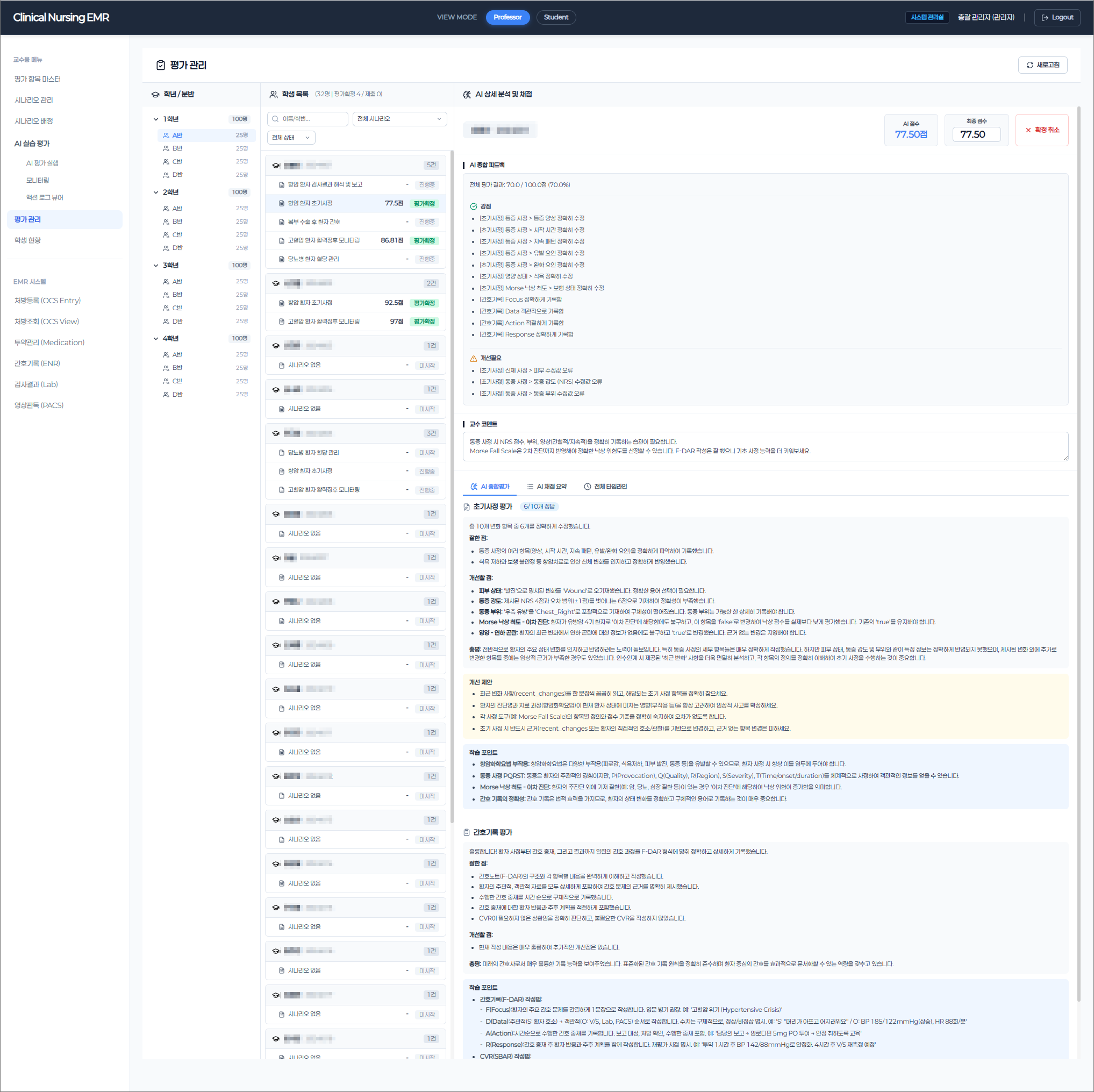

The evaluation engine was built on domain knowledge, not just code. We mapped actual clinical nursing protocols — ward rounds, medication administration, vital sign assessment, intake/output management — and translated them into 55 structured evaluation criteria that mirror real professional standards. Upon completing a simulation, 6 specialized AI Agents automatically assess each student's performance: identifying what they did well, what needs improvement, and why — with structured, actionable feedback.
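To make "structured, actionable feedback" concrete, here is a minimal sketch. It assumes each criterion records its protocol area and the nursing standard it was codified from, and that each agent returns a verdict plus what went well, what needs improvement, and why. The class names, the three-level `Verdict` scale, and the `source_ref` field are all hypothetical, not the platform's actual data model.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    # Hypothetical three-level scale; the real grading scale is not described.
    MET = "met"
    PARTIAL = "partial"
    NOT_MET = "not_met"


@dataclass(frozen=True)
class Criterion:
    """One structured evaluation criterion (illustrative fields)."""
    criterion_id: str      # hypothetical ID scheme, e.g. "MED-01"
    protocol_area: str     # e.g. "medication administration"
    description: str
    source_ref: str        # which nursing standard this criterion was codified from


@dataclass(frozen=True)
class CriterionFeedback:
    """Feedback an agent returns for a single criterion."""
    criterion: Criterion
    verdict: Verdict
    did_well: list[str]
    needs_improvement: list[str]
    rationale: str         # the "why" behind the verdict


def summarize(feedback: list[CriterionFeedback]) -> dict[str, int]:
    """Count verdicts across all evaluated criteria."""
    counts = {v.value: 0 for v in Verdict}
    for fb in feedback:
        counts[fb.verdict.value] += 1
    return counts


if __name__ == "__main__":
    vs = Criterion("VS-01", "vital sign assessment",
                   "Measure BP before giving an antihypertensive", "hypothetical-ref-1")
    med = Criterion("MED-01", "medication administration",
                    "Verify patient identity with two identifiers", "hypothetical-ref-2")
    results = [
        CriterionFeedback(vs, Verdict.MET, ["BP measured before dosing"], [],
                          "Assessment preceded administration."),
        CriterionFeedback(med, Verdict.NOT_MET, [], ["Only one identifier checked"],
                          "Two-identifier check is required before administration."),
    ]
    print(summarize(results))  # {'met': 1, 'partial': 0, 'not_met': 1}
```

Keeping `rationale` separate from the verdict lets a reviewer check the reasoning, not just the outcome.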

This architecture delivers measurable outcomes: evaluation time reduced by 90% compared to manual grading, full consistency across 100+ students per cohort, and professor workload cut by 40% — shifting their role from repetitive scoring to targeted clinical mentoring. The system has processed over 40,000 evaluation logs in production.

Grounded in official nursing standards, not arbitrary criteria

Evaluation systems in regulated domains cannot rely on approximations. Each of the 55 criteria in this platform is systematically codified from published Korean national nursing standards — the clinical references that underpin nursing licensure and professional practice guidelines. We design evaluation engines only after clinical judgment is formally mapped at the protocol level, because healthcare AI that settles for 'mostly correct' becomes a liability the moment it enters production.

6 specialized agents, each dedicated to a separate clinical domain

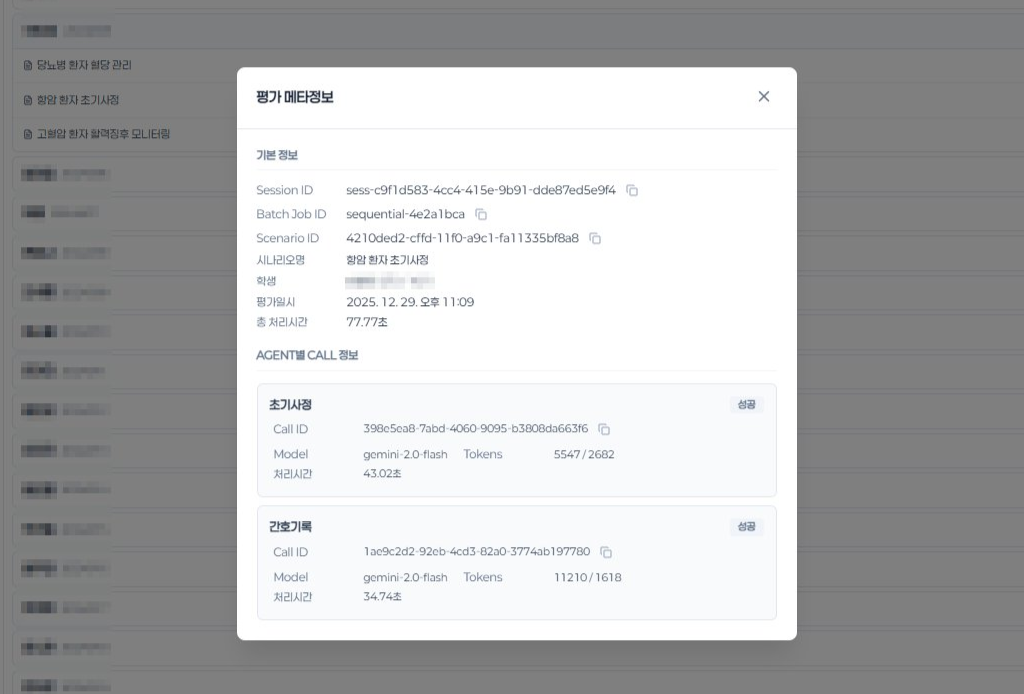

All 6 agents run in parallel against the same student session, scoring 28 independent evaluation criteria in a single pass. Every criterion traces directly back to the clinical standards defined above.
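A single-pass parallel fan-out like this can be sketched with `asyncio.gather`. Every agent receives the same session logs, and the results are merged with a check that no two agents score the same criterion. The agents here are stubs standing in for LLM calls. The domain names and the 5/5/5/5/4/4 split of the 28 criteria are hypothetical, chosen only so the totals match the text.

```python
import asyncio
from typing import Awaitable, Callable

# An agent maps a session's action logs to {criterion_id: score}.
Agent = Callable[[list[dict]], Awaitable[dict[str, float]]]


def make_stub_agent(domain: str, criterion_ids: list[str]) -> Agent:
    """Placeholder agent; a real one would send the logs to an LLM."""
    async def agent(logs: list[dict]) -> dict[str, float]:
        await asyncio.sleep(0)  # stands in for the LLM request
        touched = any(log.get("domain") == domain for log in logs)
        return {cid: 1.0 if touched else 0.0 for cid in criterion_ids}
    return agent


async def evaluate_session(logs: list[dict],
                           agents: dict[str, Agent]) -> dict[str, dict[str, float]]:
    """Run every agent concurrently on the same logs; keep results per agent."""
    names = list(agents)
    results = await asyncio.gather(*(agents[name](logs) for name in names))
    return dict(zip(names, results))


def merge_results(per_agent: dict[str, dict[str, float]]) -> dict[str, float]:
    """Flatten per-agent scores, enforcing that criteria are independent."""
    merged: dict[str, float] = {}
    for name, scores in per_agent.items():
        overlap = merged.keys() & scores.keys()
        if overlap:
            raise ValueError(f"{name} re-scores criteria: {sorted(overlap)}")
        merged.update(scores)
    return merged


if __name__ == "__main__":
    # Hypothetical domains and criterion counts (sum = 28).
    split = {"rounds": 5, "medication": 5, "vitals": 5,
             "intake_output": 5, "documentation": 4, "communication": 4}
    agents = {d: make_stub_agent(d, [f"{d}-{i}" for i in range(n)])
              for d, n in split.items()}
    logs = [{"domain": "vitals", "action": "record_bp"}]
    scores = merge_results(asyncio.run(evaluate_session(logs, agents)))
    print(len(scores))  # 28
```

Because the agents share no state and write to disjoint criteria, they can run concurrently without coordination. The overlap check turns any accidental duplication into an explicit error.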

AI proposes, humans decide: safety designed into the architecture

Every AI evaluation in this system is a draft, not a verdict. Before any score reaches a student, a professor reviews the full AI reasoning alongside the raw action logs and can approve, adjust, or override it. This isn't a compliance checkbox added at the end. Human oversight was embedded into the architecture from day one, following the same structural principle reflected in the FDA's Software as a Medical Device (SaMD) framework and the EU AI Act: AI proposes, humans decide.
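The draft-then-review rule can be expressed as a small state machine in which only a professor's decision moves an evaluation out of `DRAFT`, and only reviewed evaluations are visible to students. This is a sketch under assumptions. The class, the field names, and the rule that an override must carry an explanatory note are hypothetical, not taken from the platform.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"            # AI output; not yet visible to the student
    APPROVED = "approved"      # professor accepted the AI score unchanged
    ADJUSTED = "adjusted"      # professor changed the score
    OVERRIDDEN = "overridden"  # professor replaced the AI judgment (note required)


@dataclass
class Evaluation:
    criterion_id: str
    ai_score: float
    ai_reasoning: str                  # shown to the reviewer next to the raw logs
    status: Status = Status.DRAFT
    final_score: Optional[float] = None
    reviewer: Optional[str] = None
    note: str = ""

    def review(self, reviewer: str, decision: Status,
               score: Optional[float] = None, note: str = "") -> None:
        """Record a professor's decision; each evaluation is reviewed once."""
        if self.status is not Status.DRAFT:
            raise ValueError("evaluation has already been reviewed")
        if decision is Status.DRAFT:
            raise ValueError("decision must be APPROVED, ADJUSTED, or OVERRIDDEN")
        if decision is Status.APPROVED:
            if score is not None:
                raise ValueError("approval keeps the AI score; use ADJUSTED to change it")
            self.final_score = self.ai_score
        else:
            if score is None:
                raise ValueError("ADJUSTED and OVERRIDDEN require a score")
            if decision is Status.OVERRIDDEN and not note:
                raise ValueError("an override must explain why")  # hypothetical rule
            self.final_score = score
        self.status = decision
        self.reviewer = reviewer
        self.note = note

    def visible_to_student(self) -> bool:
        # Drafts never reach students; only reviewed evaluations are released.
        return self.status is not Status.DRAFT
```

Putting the release check on the evaluation object itself, rather than in a UI layer, is one way to make "AI proposes, humans decide" a structural guarantee instead of a convention.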

Reliability through transparent tracing, logging, and replay of every LLM call